
AWS Launches GPU Instances for AI, Big Data Workloads

In a bid to support the most compute-intensive workloads today, Amazon Web Services Inc. (AWS) has launched a new family of Elastic Compute Cloud (EC2) instance types called P2.

Backed by Nvidia's Tesla K80 GPU line, the new P2 instances "were designed to chew through tough, large-scale machine learning, deep learning, computational fluid dynamics (CFD), seismic analysis, molecular modeling, genomics and computational finance workloads," said AWS evangelist Jeff Barr in a blog post late last week.

According to Amazon EC2 Vice President Matt Garman, the P2 instances were designed for "heavier GPU compute workloads" such as high-performance computing (HPC), artificial intelligence (AI) and Big Data processing.

Compared to the g2.8xlarge, the largest instance in AWS' G2 instance family for accelerated computing, the P2 provides up to "seven times the computational capacity for single precision floating point calculations and 60 times more for double precision floating point calculations," Garman said in a statement.

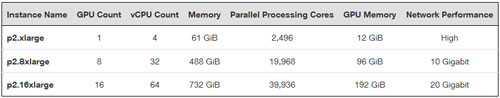

The P2 instances come in three sizes. The largest, the p2.16xlarge, has 16 physical GPUs, 64 virtual CPUs and 20 Gbps of networking capacity. All three sizes run on Intel's Xeon E5-2686 v4 (Broadwell) processor.

P2 instance specs. (Source: AWS.)


The GPUs support Nvidia's CUDA parallel computing platform (version 7.5 and higher), as well as the OpenCL framework (version 1.2).

Barr noted that the P2 instances also feature ECC memory protection, "allowing them to fix single-bit errors and to detect double-bit errors," as well as enhanced networking through AWS' Elastic Network Adapter (ENA).

On-demand pricing ranges from $0.90 to $14.40 per hour, while Reserved Instance pricing ranges from $0.425 to $6.80 per hour. P2 instances are currently available in AWS' Northern Virginia, Oregon and Ireland regions.
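To put those price ranges on a per-GPU footing, here is a rough back-of-the-envelope sketch. It uses only the hourly figures quoted above plus one loudly labeled assumption: that the cheapest price belongs to a single-GPU size. The article gives the GPU count only for the 16-GPU p2.16xlarge. The helper names `per_gpu_hour` and `reserved_discount` are hypothetical and purely illustrative.

```python
# Hourly prices quoted in the article, in US dollars.
# ASSUMPTION: the lowest prices ($0.90 / $0.425) belong to a 1-GPU size.
# The article only states the GPU count (16) of the largest size.
PRICES = {
    # size label: (gpus, on_demand_per_hour, reserved_per_hour)
    "smallest (assumed 1 GPU)": (1, 0.90, 0.425),
    "p2.16xlarge": (16, 14.40, 6.80),
}


def per_gpu_hour(price: float, gpus: int) -> float:
    """Effective cost of one GPU for one hour (hypothetical helper)."""
    return price / gpus


def reserved_discount(on_demand: float, reserved: float) -> float:
    """Fractional saving of the reserved rate versus the on-demand rate."""
    return 1 - reserved / on_demand


if __name__ == "__main__":
    for name, (gpus, od, ri) in PRICES.items():
        print(
            f"{name}: on-demand ${per_gpu_hour(od, gpus):.3f}/GPU-hr, "
            f"reserved ${per_gpu_hour(ri, gpus):.3f}/GPU-hr, "
            f"reserved discount {reserved_discount(od, ri):.1%}"
        )
```

On the p2.16xlarge figures alone, which do not depend on the assumption, this works out to $0.90 per GPU-hour on demand and $0.425 per GPU-hour reserved, a reserved discount of roughly 53%. If the single-GPU assumption holds, the smallest size has the same per-GPU rate.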